Creating custom Linux solutions

(Compiled from r369)

Copyright © 2002, 2003, 2004, 2005, 2006, 2007 René Rebe, Susanne Klaus

This work is licensed under the Open Publication License, v1.0, including license option B: Distribution of the work or derivative of the work in any standard (paper) book form for commercial purposes is prohibited unless prior permission is obtained from the copyright holder. The latest version of the Open Publication License is presently available at http://www.opencontent.org/openpub/.

(TBA)

Table of Contents

- Preface

- 1. Introduction

- 2. Basic Concepts

- 3. Hardware and Kernel Support

- 4. Building T2

- 5. Inside T2

- A Package

- Description File (.desc)

- Configuration File (.conf)

- Patch Files (.patch)

- Cache File (.cache)

- Package Creation in Practice

- Preparing

- Custom Code

- Testing

- Getting New Packages Into T2

- Compiler Optimisations

- The Automated Package Build

- Working with Variables

- Build System Hooks

- Command Wrapper

- Hacking with Bash

- Bash Version

- General

- Introduction

- How to Watch the Value of a Variable While Running a Script

- How to Interrupt Scripts Based on Conditions

- Exceptions

- How to Skip Part of a Script While Testing

- Convenient Variables

- 6. T2 Target Development

- 7. Installation

- 8. Configuration

- Boot Loader

- LILO

- GRUB

- Yaboot

- SILO

- ABoot

- I have no root and I want to scream

- Linux Kernel

- Managing Filesystems and Files

- U/dev

- Hotplug Hardware Configuration

- Initrd

- Network Configuration

- Configuration File

- Keywords Recognized by the Basic Module

- Keywords Recognized by the DHCP Module

- Keywords Recognized by the DNS Module

- Keywords Recognized by the Iproute2 Module

- Keywords Recognized by the Wireless-tools Module

- Keywords Recognized by the Iptables Module

- Keywords Recognized by the PPP Module

- Profiles

- Configuration Examples

- Command-line Tools

- Tricking With Pseudo Interfaces

- Compatibility

- NFS (Network File System)

- CFEngine - a Configuration Engine

- A. Partitioning Examples

- B. Serial Console

- C. Copyright

- Index

List of Figures

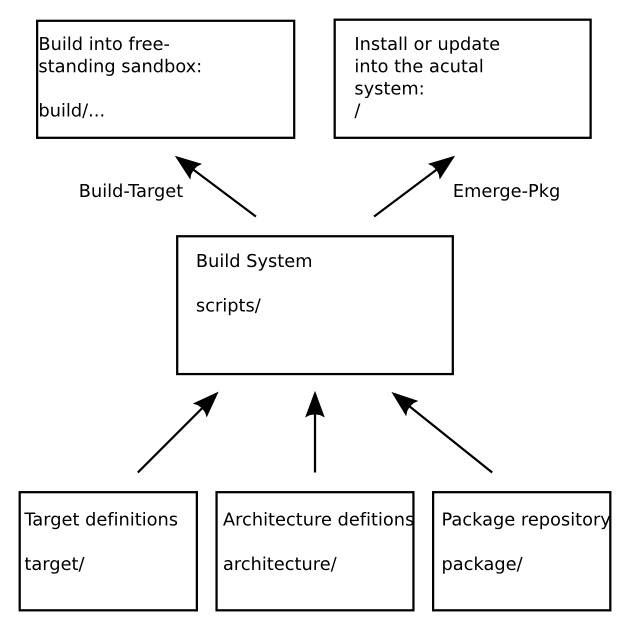

- 2.1. T2 basic overview

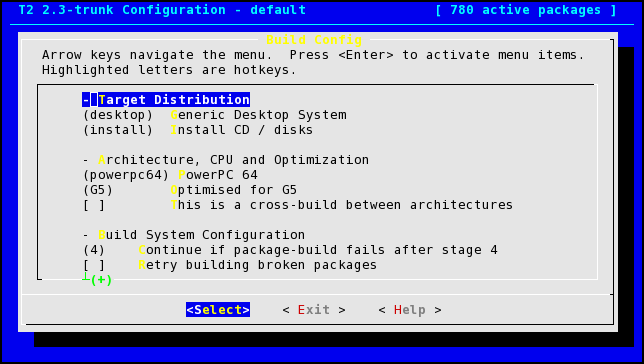

- 4.1. T2 config screenshot

List of Tables

This book is written to document the 7.0 series of the T2 System Development Environment (SDE), but is intended to be useful for reference with earlier versions such as 6.0 and 2.1. Where incompatible changes have been implemented we tried to outline them.

This book is written for computer-literate folk who want to use T2 as an operating system or as a system development environment for custom Linux appliances such as routers, firewalls, network attached storage (NAS), desktops, servers or embedded boards and products.

This book aims to be useful to people of widely different backgrounds, from people who only intend to install final products based on T2 up to experienced system administrators, system integrators and developers who need insight into every detail of the T2 environment. Depending on your own background, certain chapters may be more or less important: while administrators and developers might be most interested in quickly getting to the details in Chapter 4, Building T2 and Chapter 5, Inside T2, end-users can skip over the T2 internals and start reading Chapter 7, Installation and Chapter 8, Configuration.

This section covers the various conventions used in this book.

- Constant width

Used for commands, command output, and switches

- Constant width italic

Used for replaceable items in code and text

- Italic

Used for file and directory names

Note

This icon designates a note relating to the surrounding text.

Tip

This icon designates a helpful tip relating to the surrounding text.

Warning

This icon designates a warning relating to the surrounding text.

Note that the source code examples are just that—examples. While they should compile with the proper compiler incantations, they are intended to illustrate the problem at hand, not necessarily serve as examples of good programming style.

The chapters that follow and their contents are listed here:

- Chapter 1, Introduction

Is a short introduction to T2.

- Chapter 2, Basic Concepts

Covers the history of the T2 SDE as well as its features, architecture, components - a general overview and why it is different.

- Chapter 3, Hardware and Kernel Support

Is a short overview of the hardware architectures and operating systems supported by T2 so far.

- Chapter 4, Building T2

Outlines how to build your own flavour of T2 from the provided source code repository.

- Chapter 5, Inside T2

Describes the internals of T2, the package descriptions, the build system, the configuration and all the details from a developer's point of view. It further demonstrates in great detail how to create a new package and how the automated build process works, followed by in-depth descriptions of the hooks and variables available to take over control.

- Chapter 6, T2 Target Development

Covers how to create custom targets, that is, Linux solutions and appliances built with T2, and how to control all the details and keep the configuration permanently and cleanly separated from the remaining T2 source tree.

- Chapter 7, Installation

Walks through a classic, textual installation of an installable end-user T2 flavour as well as the LiveCD and gives some specific hints for embedded boards and the Wrt2 wireless router.

- Chapter 8, Configuration

Discusses the various configuration details, from CPU architecture specific boot loaders and their configuration settings, through users and permissions, to other everyday preferences and tweaks.

- Appendix A, Partitioning Examples

Describes the details of platform-dependent partitioning and the related software tools.

- Appendix B, Serial Console

Discusses how to use a serial console for headless servers and embedded boards (or just for debugging).

This book started out as bits of documentation written by René Rebe, which were then gradually updated and enhanced according to the changes and progress made in T2 as well as user and developer feedback. As such, it has always been under a free license. (See Appendix C, Copyright.) In fact, the book was written in the public eye, as a part of T2. This means two things:

You will always find the latest version of this book in the book's own Subversion repository.

You can distribute and make changes to this book however you wish, as it's under a free license. Of course, rather than distribute your own private version of this book, we'd much rather you send feedback and patches to the Subversion developer community. See ??? to learn about joining this community.

A relatively recent online version of this book can be found at http://www.t2-project.org/documentation/.

This book would not be possible - nor very useful - if T2 did not exist. For that, the authors would like to thank Clifford Wolf for starting ROCK Linux and all the countless people who contributed to it and T2 over the last decade.

We also would like to thank all who contributed to this book with informal reviews, suggestions, and fixes: While this is undoubtedly not a complete list, this book would be incomplete and incorrect without the help of: Lars Kuhtz, Valentin Ziegler, Urs Pfister, and the entire T2 community.

Special thanks to Ben Collins-Sussman, Brian W. Fitzpatrick, and C. Michael Pilato for their outstanding XML Docbook setup as created for the Subversion book, which is also used to transform this book into the final PDF, PostScript(tm) and HTML form.

We hope you find this book a joyful read. Joy and collaboration are what made GNU/Linux great, and it is in that spirit that T2 has been created and evolves!

T2 is not yet another Linux distribution. It is a flexible System Development Environment that not only supports automated rebuilds, whether to create a new version or to optimize for the target CPU in use, but also allows the creation of adapted distributions. The level of adaptation ranges from simple package selection (e.g. KDE, GNOME, Xfce, Apache, Samba, etc.), through special purpose patches (e.g. special features for GUI applications, specially patched kernels, ...) and custom output formats (such as Live-CD or ROM image for embedded systems), to tight integration with 3rd party administration, management, networking or other software. In summary, T2 is an integrated development environment for custom distributions.

The possible application range includes normal servers, desktop systems, specialized firewalls, routers or Network Attached Storage solutions and all kinds of embedded devices.

The T2 framework includes the automated build system, architecture and target descriptions, as well as a rich set of package descriptions, currently already over 2800! These package descriptions only contain the parameters needed to build a package and information for the end-user installer. Additionally, the T2 packages are left unmodified wherever possible, so that by default the packages behave as intended by their upstream authors. Patches are only included if needed for clean compilation (e.g. cross compilation) or for bug and security fixes, not the intrusive set of patches most often included in commercial distributions.

T2 is a fork of ROCK Linux[1], which in turn is not based on another distribution: it was developed from scratch in 1998 by Clifford Wolf and many other contributors.

The name 'T2' started as an internal project name, standing for "try two, second try" and "technology (level) two", and was not intended as the final project name. However, T2 became popular within the first months, so the name was no longer easy to change and eventually became the official project name.

At this point we would like to point out that T2 need not be hard to install. While there is indeed a classic installer option, a text mode installer for which one needs to be a fairly experienced Linux user, more user-friendly options such as a LiveCD (DVD, USB stick, ...) exist as well. Additionally, a T2 target can add its own startup and install setups and thus innovate in this area.

However, many users and administrators still prefer the classic textual install as it offers a great deal of flexibility and control. For example, all the services have to be turned on by hand and you have to be able to understand most configuration files from the original packages. T2 does not contain an intrusive set of system administration utilities - however the basic system configuration is provided by our setup tool STONE. Powerful and yet complex tools need to be set up correctly - and colorful setup tools hide the fact that the administrator needs to be informed about the configuration details. Those automate-everything tools are one of the reasons for all the spam and worms flooding the Internet. So think again if you see manual configuration as a problem - it also teaches you how to do things right.

Note

To Linux newbies: Building a server configuration with T2 can involve a steep learning curve. Nevertheless it is a very rewarding experience and after some months you will realise you will never look back. Make sure you have time to dive in and understand what it is all about. Linux is one of the best documented operating systems in the world! Make sure to tap those resources.

But because of its clean structure and packages T2 Linux is a very good Linux edition for system administrators and end-users. T2 Linux was developed from scratch and is maintained by a collaborative group of people.

T2 is cross-architecture.

Portability is a great advantage of T2. It is possible to cross-compile easily or add new architectures in a short time. Currently there is support for Alpha, ARM, AVR32, Blackfin, CRIS, HPPA, HPPA64, IA64, MIPS, MIPS64, Motorola 68000 (68k), PowerPC, PowerPC-64, SPARC, SPARC64 (UltraSPARC), SuperH, x86, and x86-64.

T2 is cross-platform.

Due to the nature of the automated build system and clean, parameterized packages it is easy to exchange the Linux kernel with Hurd, Minix, a BSD, Haiku, OpenSolaris or OpenDarwin to build a complete non-Linux platform. Work to support Minix and OpenBSD has begun, however this still lacks more volunteers.

Another way to utilize T2 is to build single packages for foreign, binary-only systems, that is, systems where the kernel or user interface sources are not open, such as Apple's Mac OS X or Microsoft's Windows. T2 could be used there as an add-on manager for open source packages.

T2 aims to use as few patches on packages as possible.

T2 is different: it takes the form of a group of scripts for building and installing the distribution. One of the basic assumptions is that packages should be installed following the standards of their creators. This contrasts with the patched-up systems created by most other distributions. T2 only patches when absolutely necessary: compile, security and bug fixes only.

T2 contains the latest versions of packages.

One great aspect of T2 is that the package configurations usually point to the latest packages and so also one of the latest kernels.

One great benefit of Open Source software is that software gets updated often. With T2 you get a tool to update your entire distribution often - instead of regularly updating the kernel and packages by hand. Together with a tool like Cfengine (GNU Configuration Engine, see the section called “CFEngine - a Configuration Engine”) you get an environment which can be updated easily. In fact T2 users tend to run really up-to-date systems.

T2 is completely self-hosting.

It has been proven that the heavy use of core applications during a complete T2 Linux build is a really good stress test for these packages and helps to find bugs, ranging from the very trivial to the very intricate.

T2 is complete.

Although a minimal T2 distribution can be rather small (less than 10 MB for an embedded firewall), you already get a compact, functional system from the minimal package selection template. Beyond that, T2 includes a big package repository covering a wide range of application areas: X.org, KDE, GNOME, Xfce, Apache, Samba, Squid, and multiple thousands more. Among those is also quite an exceptional selection of special purpose, minimal embedded packages such as dietlibc, uclibc, busybox, embutils, dropbear and many others not commonly found in other systems.

Extending T2 is easy.

If you want to add a package to T2 you normally only need to fill in some textual meta information. All packages get downloaded from their original locations; for each package T2 just maintains meta-data.

The build-system builds most package-types automatically and modifying a package build, or even replacing its build completely for a new target, can be achieved within the target.

Scripting is power.

Because T2 consists of shell scripts it is easy to see what is happening under the hood. It also gives you the possibility to change the automated behaviour according to your own needs. The included targets are just examples of such adaptations and contrast with, e.g., RedHat's policy not to support any automated RPM rebuilds (in addition to the often non-compilable sRPMs).

In fact the system has proven to be very powerful precisely because of the scripting system.

T2 is the ultimate do-it-yourself distribution.

The build and install system is easily accessible and modified to one's own needs. Furthermore the direction T2 development has taken emphasises this particular strength of the distribution.

There is no other SDE

There is no other SDE where you can define a target, with package selection, package modifications, optimization settings and cross-compile builds in such flexible and complete ways.

T2 adheres to standards.

T2 gets as close to standards as it can, but with a pragmatic view. For example it uses the FHS (Filesystem Hierarchy Standard) and LSB (Linux Standard Base), but with exceptions where impracticable.

T2 makes no assumptions about the past.

If you have been using one of the major distributions like SuSE or RedHat you'll realise a lot of items have been patched in. This increases the dependency on those distributions (intended or not). It is hard for the larger distributions to reverse this practice because of backward compatibility issues (when upgrading). T2 will patch some sources (for example when a package does not comply with the FHS), but keeps this to an absolute minimum - for example no added features or branding.

The same philosophy applies to T2 itself. T2, and its package system, have gone through several redesign phases and little consideration is given toward backward compatibility. This may be inconvenient, but the fact is that every incarnation of T2 is cleaner, yet more powerful than its predecessor.

T2 is built optimally.

With T2 all packages are built with the optimisations you want, for the target platform you choose. Other distributions usually build for generic i386 or Pentium. With T2 you can automatically build Linux, glibc, X.org, KDE, GNOME and the other CPU intensive packages - yes the whole distribution! - optimized for your CPU.

T2 uses few resources.

T2 build and installation scripts are Bash scripts. Due to the optimization for a given CPU, the non-X11 based installation and setup as well as the lightweight init scripts you can save many resources on old computers. Additionally space optimized alternative C libraries such as dietlibc and uclibc as well as minimal add on tools such as busybox and embutils can reduce resource use drastically. Also available are more lightweight choices in various other areas from Xfce to lightweight http servers, init systems, ...

Services have to be turned on explicitly

Also packages and services have to be turned on explicitly, manually. When you boot a fresh T2 installation you'll find the minimum of services configured to be active.

From the system administrator's perspective this is ideal for new installations. It compares favourably with cleaning up and closing all services of a bloated commercial distribution.

T2 is ready to burn on a CDROM.

After building T2 from sources you can burn the (target) T2 distribution directly onto CDROM for installation on other machines (and pass it on to other people).

T2 can easily be installed over a network.

The installation process is terminal based. Installing remotely and configuring the new system over a network connection is not uncommon.

There is no other distribution like T2 Linux.

By now it should be clear T2 does not look like any of the other distributions. Debian, while also a collaborative effort, is a great distribution but is in many ways more like the commercial editions. The BSD variants come closer.

The only ones that have come close recently are OpenEmbedded and Gentoo. It is interesting to see where these build-it-yourself (meta) distributions compare to and differ from the System Development Environment T2:

Table 1.1. T2 SDE, OpenEmbedded and Gentoo compared

| T2 | OpenEmbedded | Gentoo |
|---|---|---|
| Bash based | proprietary BitBake based | Python / Bash based |
| chroot build | ??? | chroot build |
| developer and end-user oriented | developer oriented | end-user oriented |
| fully automatic package build type detection | BitBake recipes with inheritance | each package needs a full ebuild script |
| autodetected dependencies | manually hardcoded dependencies (many monkeys method) | manually hardcoded dependencies (many monkeys method) |
| sophisticated wrappers | ??? | hardcoded e.g. CFLAGS |
| installable, live, and firmware ROM images | firmware ROM images | rebuild on each system, LiveCD creation as add-on scripts (Catalyst) |
| multiple alternative C libraries | multiple alternative C libraries | just the single packages, no whole-build support |
| multiple init systems | ??? | just the single packages, no sophisticated pre-configuration |
| cross build of X.org and more | cross build of some (how many ???) | no integrated cross build support, separate Embedded Gentoo effort |
| cluster build, distcc, icecream, ... | ??? | - |
| separated target config and custom file overlay | separated target config, likewise | emerge, edit compile on each system |
| thousands of packages | mostly embedded package subset, not all desktop and server packages | more and more packages get removed (into external overlays, such as E17 et al.) |

The biggest difference is that Gentoo does not include the facility to predefine targets and their custom setup permanently. Instead, on Gentoo everyone re-emerges all the packages from pre-built binaries or source code on each system. Inconvenient for developers is that Gentoo does not include an auto-build system like T2 does. Each package needs a full .ebuild script where even the tarball extraction is triggered manually - and often even the resulting files are copied by hand. Gentoo and OpenEmbedded are also based on hardcoded dependencies - in contrast T2 determines them automatically and stores them in a cache.

The biggest difference to OpenEmbedded is that T2 uses normal shell scripts to implement the build system, while OpenEmbedded is based on the proprietary BitBake tool and associated meta-data, which must be explicitly installed and is yet another proprietary format to learn. Getting started is easier and the learning curve gentler in the T2 world, which makes T2 a comfortable foundation for building your own (specialized) distribution. That is why we call it a System Development Environment, abbreviated to SDE. Also the T2 build system is faster and supports distributed cluster builds (via distcc and icecream) and compiler caching (via ccache). You can find more information about these tools at http://distcc.samba.org/, http://en.opensuse.org/Icecream and http://ccache.samba.org.

It has been stated T2 Linux is more BSD with a GNU/Linux core than anything else: T2 has a ports collection (called package repositories), a make world (called scripts/Build-Target) and like OpenBSD prefers the disabled feature by default method. This may make OpenBSD T2's closest relative.

T2 has a mailing list and an archive thereof. It is a good idea to subscribe and to voice your ideas and amendments. Not like Groucho:

It is better to remain silent and be thought a fool, than to open your mouth and remove all doubt.

Often there is discussion on IRC:

irc.freenode.net channel #t2

You can find a threaded digest of the mailing list at http://www.t2-project.org/contact/.

Finally the T2 SDE website contains a nice search facility which can be really helpful. In fact, before sending a mail to the mailing list, take a quick look first. It is likely someone has had the same problem before.

[1] The fork happened due to major technical disagreements in the ROCK Linux 2.1 development series, as well as ongoing communication problems, disagreements in project management, openness and Linux conference presentations.

It was not that easy to develop a whole (Linux) system from scratch, especially as years ago all the many details were not as well documented as they are today. Bootstrapping a complete system from scratch required in-depth knowledge about the kernel, compiler and system libraries, and solving a number of obstacles in order to get all the toolchain and library parts correctly built together to form a functional system. That is also why most of the many Linux flavours build upon existing Debian, RedHat or SuSE distributions - just extracting the pre-built binaries and injecting a few custom files. T2 on the other hand is among the few designed from the ground up to define a system without historic cruft, with the additional bonus of the automated build system to inject custom modifications as needed.

Designing a self-hosting system, one that can be used to re-build itself, is the next technical challenge.

The T2 SDE is both: designed from the ground up with an infrastructure optimized for (cross-)compiling from package sources, as automated as possible, as well as self-hosting.

A vast amount of work was put into setting up the whole infrastructure for cross compiling to all the different CPU architectures supported.

The T2 SDE automatic build system is written in the form of shell scripts. The main components, along with additional tools, can be found in the directory scripts/.

It is responsible for parsing package meta-data and config files, creating the T2 sandbox environment with its file modification tracking functionality and command wrappers, packaging the result and all the other needed actions between the steps in the build chain. It is also able to perform dependency analysis and includes the menu-driven configuration program to configure the build.

Usually there is one script for a specific function, for example scripts/Config, scripts/Download, scripts/Build-Target and scripts/Create-ISO, among others. Some scripts are also re-used internally; for example scripts/Download is invoked automatically on demand to fetch the package sources when they are required.

T2 build scripts are all run from the T2 top-level directory, like scripts/Config and scripts/Build-Target, and parse and process the various meta information from the respective directories such as package/, architecture/ and target/.

The package meta-data is defined by key-value pairs in various package repositories located in a sub-directory named package/, sorted into categories like package/base, package/network, package/x11, and so on. For each package a plain-text .desc file contains the original author, the T2 maintainer, license, version, status, download location with checksum, textual information for the end-user and architecture limitations, as well as additional optional tags (see the section called “Description File (.desc)”). Patches needed to compile the package or to fix security or usability bugs can simply be placed in the package directory.
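As a rough illustration of the key-value idea, the following sketch reads tagged lines from a description file. The line layout `[TAG] value`, the tag letters used in the demo and the helper name `desc_tag` are assumptions made for this example only; the authoritative format is covered in the section called “Description File (.desc)”.

```shell
# Hypothetical helper: print the value(s) of one tag from a .desc-style file.
# Assumes each meta-data line looks like "[TAG] value" (an assumption for
# illustration, not a statement about the real file format).
desc_tag() {
    local tag="$1" file="$2"
    # Match lines that start with the literal "[TAG] " and strip that prefix.
    sed -n "s/^\[$tag] //p" "$file"
}

# Self-contained demo with a made-up package description.
demo=$(mktemp)
cat > "$demo" <<'EOF'
[I] An example package
[V] 1.2.3
[L] GPL
EOF
desc_tag V "$demo"    # prints 1.2.3
rm -f "$demo"
```

Because the meta-data is plain text, ordinary tools such as sed and grep are enough to query it, which fits a build system that is itself written in shell.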

While the automated build system iterates over all packages to be built, it parses the package's meta-data and extracts the source tar-balls, applies all the patches and detects the build type of the source automatically (e.g. configure make, xmkmf, plain make or variations - see the section called “The Automated Package Build”) and builds the package with generated default options. For packages where modifications in the process are appropriate a .conf file for the package can modify variables, add commands to predefined hooks or replace the whole process. An automatically generated .cache file holds generated dependency information as well as reference build-time, package file count and the package size.
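The idea of build type detection can be sketched as follows. This is not the actual T2 code: the function name `detect_build_type`, the files it looks for and the order of the checks are assumptions chosen to mirror the build types named above.

```shell
# Hypothetical sketch of build type detection; not the actual T2 code.
# Inspects well-known files in an unpacked source tree and names a build type.
detect_build_type() {
    local srcdir="$1"
    if [ -f "$srcdir/configure" ]; then
        echo "configure"    # configure script followed by make
    elif [ -f "$srcdir/Imakefile" ]; then
        echo "xmkmf"        # xmkmf generates the Makefile, then make
    elif [ -f "$srcdir/Makefile" ] || [ -f "$srcdir/makefile" ]; then
        echo "make"         # plain make
    else
        echo "unknown"
        return 1
    fi
}

# Usage (the path is a placeholder):
#   detect_build_type /path/to/unpacked/source
```

The checks run from the most specific to the most generic, since a tree that ships a configure script often contains a Makefile template as well.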

Additionally, CPU architecture specifics are located in the architecture/ directory, including optimization options, patches or other required quirks.

The target configuration, patches and other payload reside in target/.

Before T2 2.0, "sub-distributions" were extracted from a fully built distribution as a sub-set. This had the drawback that the first build needed to build all packages needed by the sub-distributions, and that sub-distributions could only modify the packages to a minimal degree, since this had to happen after the normal package build.

With T2 2.0, "targets" were introduced as a smarter way of dealing with specialization: a target limits its package selection, and thus download as well as build, to what is really required. Furthermore it can modify any aspect of a package build, apply custom feature or branding patches and even completely replace the logic to build a package or the final image creation process.
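The effect of a target limiting the package selection can be pictured with a small sketch. The one-name-per-line file format and the function name `target_packages` are hypothetical and do not reflect the actual target syntax, which is described in Chapter 6, T2 Target Development.

```shell
# Hypothetical sketch: restrict the set of packages to what a target asks for.
# Both input files hold one package name per line; this format is assumed
# for illustration and is not the actual T2 target syntax.
target_packages() {
    local available="$1" selection="$2"
    # -F: fixed strings, -x: match whole lines, -f: read patterns from a file
    grep -Fxf "$selection" "$available"
}

# Usage (file names are placeholders):
#   target_packages all-packages.txt my-target-selection.txt
```

Only the names printed by such a filter would then need to be downloaded and built, which is the saving over the older sub-distribution approach.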

T2 is under continuous development, with the development work being done in the version control system, currently Subversion. Each development cycle has a version as its target milestone, for example 7.0, 8.0, ... When most development goals are achieved, the trunk is branched and this version is prepared for release. In parallel, development continues in the trunk with the next milestone version, 8.0 in this case. So each version milestone has a release series at the end of the development cycle. Though the development version from the trunk usually works well enough to download and build a common Linux system, there is no guarantee that all architectures, C libraries, packages and target configurations build perfectly at any given time.

The packages of the development trunk tend to be more up to date than the stable ones, as the branches are usually maintained with API and ABI compatibility in mind and mostly include security and compile fixes only. Sometimes the development edition will be in research mode adapting the latest kernel, C library, compiler or CPU architecture combination or rearranging the build scripts for new features, concepts and cleanups.

If you are a normal user or administrator of production machines you should choose to use the latest stable branch. The development tree is usually used by developers, while users and administrators often only try the trunk when it approaches the next stable release.

In the beginning T2 was created to compile i386 GNU/Linux specifically optimized for a given x86 CPU, such as Intel's Pentium or AMD's K6, to benefit as much as possible from the latest instruction extensions the processor manufacturers integrated into their CPUs.

As this goal was reached quickly, additional, more powerful but also, at that time, harder to get architectures were targeted: Alpha, PowerPC and SPARC. Later on UltraSPARC, MIPS, MIPS64 and HPPA were eventually adapted as their prices dropped to reasonable levels. Due to the flexibility of the scripted and open build process, IA-64 as well as x86-64 could be supported immediately when they were introduced by their manufacturers. Just the toolchain support patches had to be pulled in and all the packages could be cross-compiled automatically.

Supporting the whole range of personal computer and workstation platforms was just the beginning, and the developers set out to support the various CPUs used in embedded systems, such as ARM, Motorola 68000 and SuperH, and the newer AVR32, Blackfin and CRIS CPUs.

As some of the CPUs do not come with a Memory Management Unit (MMU), support for the modified Linux variant named uClinux supporting MMU-less architectures was added.

Additionally, add-on kernel patches such as RTAI for real-time capability can optionally be applied.

With all the many volunteers and employees working on and contributing to Linux world-wide, Linux is one of the most flexible kernels to choose. Even in the absence of an MMU it is capable of running on a wide range of CPUs, supports real-time requirements and allows choosing from a great pool of different CPU architectures to match exactly the performance and power consumption requirements set by the target system specification.

Despite Linux' strong support for various CPU architectures, including MMU-less systems and real-time capabilities, as a monolithic design Linux is still vulnerable, at least with regard to programming mistakes. For example, dereferencing bad pointers or out-of-bounds array accesses can crash the whole kernel. Imperfect loop conditions, e.g. in combination with unexpected hardware conditions, can also result in infinite loops and thus stall the whole system.

This is where Minix, as an open source implementation of a micro-kernel, shines with self-healing capabilities such as reloading crashed or unresponsive driver processes.

However, at the time of writing Minix only supports x86 CPUs, while ports to PowerPC and ARM are in progress. The minimal size of the bare micro-kernel could, however, potentially decrease the amount of work required for new architecture ports, and the isolated driver process model could shorten the edit-compile cycle during device driver development.

Implementing support for other, open source operating systems inside the T2 SDE is not that hard at all. As many of the included packages are either plain ANSI C or C++ code that compile on any system anyway, or the platform dependant bits are already ported to the vast majority of systems adding a new OS kernel to T2 comes down to:

adding a suitable "kernel header" package

adding the matching C library

adding the matching C compiler (if any other than GCC)

adding the kernel package itself

adding some definitions such as registration of the kernel name to T2

adding other custom packages, e.g. for network and firewall utilities, graphic subsystem packages et al.

The first thing to do is to choose one of the T2 Linux versions and download it from http://www.t2-project.org/. A tarball of the distribution scripts is only a few MB in size and downloads quickly.

T2's stable versions go hand-in-hand with stable Linux kernel versions. Nevertheless, most T2 users use the development versions of T2 Linux anyway. One addictive feature of T2 is that you get the latest version of every package, including the kernel. The development versions of T2 have proved to be pretty robust, but a development version can potentially be broken.

Untar the file and it unpacks documentation, build scripts and the directories with package definitions.

The size of T2 Linux is so small because it only contains the build scripts and meta information for downloading the kernel and packages from the Internet. The rest of the distribution gets downloaded in the next phase.

Once you have unpacked the distribution you can see directories like:

Documentation - includes the INSTALL and FAQ documents

architecture - contains the definitions particular to a platform

download - where the downloaded tar files will be stored

misc - miscellaneous files including the source of internal helper applications

package - contains the definitions for downloading and installing packages grouped into several repositories

scripts - contains the configuration, download and build scripts

target - contains the target definitions

In former (CVS) times the T2 source tree was kept in sync using rsync. Nowadays, with powerful version control systems available, the T2 sources should be accessed using them. The drawback is that you cannot get updates with an extracted tarball as base - you need to check out the sources via the version control system. Currently all source trees of T2 are hosted inside a Subversion repository. The command to get the latest sources via Subversion is:

svn co http://svn.exactcode.de/t2/trunk t2-trunk

You even have the choice to use a read-only replicated server:

svn co http://svn2.exactcode.de/t2/trunk t2-trunk

Alternatively you can use the svn:// protocol, which usually offers a performance gain. If you have an account with write access, or plan to contribute a lot of changes back, the https:// protocol must be used, as write access is only offered through an encrypted channel.

To access a stable tree instead of the development trunk, just replace trunk with branches/6.0 or an equivalent. You can even check out tagged releases by using tags/6.0.0 - just substitute the version you need.

If you already use Subversion to obtain the source, you can also switch to another branch or tag with the svn switch command, which transfers only the changes.

Let us suppose you have checked out the development trunk but decide you need the controlled stability of the stable branch. The associated svn command to execute would be:

svn switch http://svn.exactcode.de/t2/branches/6.0

To switch to a specific tagged version you need:

svn switch http://svn.exactcode.de/t2/tags/6.0.3
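The URL layout used by the commands above can be captured in a small helper. This is only a sketch: the function name t2_svn_url is hypothetical and not part of T2, while the base URL and the trunk, branches/ and tags/ paths are taken from the examples above. It prints the commands rather than running them.

```shell
# Hypothetical helper (not part of T2): print the Subversion URL for
# the trunk, a stable branch or a tagged release.
t2_svn_url() {
    base=http://svn.exactcode.de/t2
    case "$1" in
        trunk)  echo "$base/trunk" ;;
        branch) echo "$base/branches/$2" ;;
        tag)    echo "$base/tags/$2" ;;
        *)      echo "usage: t2_svn_url trunk|branch|tag [version]" >&2
                return 1 ;;
    esac
}

# Print the commands instead of executing them.
echo "svn co $(t2_svn_url trunk) t2-trunk"
echo "svn switch $(t2_svn_url branch 6.0)"
```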

This section discusses the T2 build system as it has been implemented since version 2.0. We assume that you want to build a bootable and installable end-user target like the generic, minimal, desktop or server target.

T2 requires just a few prerequisites for building:

a Bash shell: as the T2 scripts take advantage of some convenient Bash specific features and shortcuts

ncurses: for the scripts/Config tool

cat, cut, sed & co: for the usual shell scripting

The build scripts will check for the most important tools and print a diagnostic if a tool or feature is found missing.
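If you want to check for the tools by hand before starting, something like the following may do. It is a minimal sketch and not the check the T2 scripts perform themselves; the function name missing_tools is made up for this example, and the tool list is merely taken from this chapter.

```shell
# Print every tool from the argument list that is not found in PATH.
missing_tools() {
    for tool in "$@"; do
        command -v "$tool" >/dev/null 2>&1 || echo "$tool"
    done
}

# Tools named in the list above; gcc and curl are mentioned elsewhere
# in this chapter as needed by scripts/Config and scripts/Download.
missing_tools bash cat cut sed gcc curl
```

An empty result means that every listed tool was found. It says nothing about whether the versions are recent enough.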

More information can be found in the trouble-shooting section (see the section called “Troubleshooting”) and kernel configuration (see the section called “Linux Kernel”).

T2 comes with a menu driven configuration utility even for the build configuration:

scripts/Config

which compiles the configuration program (you need gcc installed for this).

The most important option (ok - well maybe the most important after the architecture for the build ...) is to choose a so-called 'target'.

Relevant targets are:

Generic System

Generic Embedded

Exact Desktop System

Archivista (Scan-)Box

Sega Dreamcast Console

Psion Windermere PDA

Reference-Build for creating *.cache files

Wireless Router (experimental!)

Most of the targets have a customization option. The "Generic System" customization looks like this:

No package preselection template

Minimalistic package selection

Minimalistic package selection and X.org

Minimalistic package selection, X.org and basic desktop

A base selection for benchmarking purpose

Also depending on the target type the distribution media can be selected. For the "Generic System":

Install CD / disk

Live CD

Just package and create isofs.txt

Distribute package only

If you want to alter the package selection (performed by the target), this is possible at this stage, under the expert menu. Deselected packages are also not downloaded from the Internet.

So now we start configuring the target that will build the packages for the system:

scripts/Config

The most useful options in scripts/Config are:

Always abort if package-build fails: Deselect normally, so your build continues (there are always failing packages).

Retry building broken packages: When starting a rebuild (after fixing a package) the build retries the packages it could not build before.

Disable packages which are marked as broken: When checked, T2 will not try to build the packages that have errors (as noted in the cache file, see the section called “Cache File (.cache)”).

Always clean up src dirs (even on pkg fail): Select when you need the disk space - though it can be useful to have the src dir when you need to troubleshoot.

Print Build-Output to terminal when building: Select for exciting output.

Make rebuild stage (stage 9): Select only if you have too much CPU power or are paranoid about rare dependency problems between packages. The downside is a doubled total build time, and the rebuild should normally not be necessary.

Now the auto-build-system has a config to use and can determine which packages need to be downloaded and built. The scripts/Download script contains the functionality to perform the downloads - and when started with the -required option it only fetches the sources needed by the given config.

scripts/Download -required

This downloads the selected packages, with which you can build a running system. See the section called “Download Tips” for some tips.

Downloading the full sources for the various packages used may take a while - depending on how fast you can move up to 2 GB through your Internet connection. While waiting you may want to go out, sleep, work and go through that routine again.

The Download script may complain that you have the wrong version of bash and that you need curl.

The full options of scripts/Download are [2]:

Usage:

scripts/Download [options] [ Package(s) ]

scripts/Download [options] [ Desc file(s) ]

scripts/Download [options] -repository Repositories

scripts/Download [options] { -all | -required }

Where [options] is an alias for:

[ -cfg <config> ] [ -nock ] [ -alt-dir <AlternativeDirectory> ]

[ -mirror <URL> | -check ] [ -try-questionable ] [ -notimeout ]

[ -longtimeout ] [ -curl-opt <curl-option>[:<curl-option>[:..]] ]

[ -proxy <server>[:<port>] ] [ -proxy-auth <username>[:<password>] ]

[ -copy ] [ -move ]

On default, this script auto-detects the best T2 SDE mirror.

Mirrors can also be a local directories in the form of 'file:///<dir>'.

scripts/Download -mk-cksum Filename(s)

scripts/Download [ -list | -list-unknown | -list-missing | -list-cksums ]

Quite a few contributors and sponsors of T2 Linux have created mirrors for source tarballs - scripts/Download tries to select the fastest mirror.

Sometimes a mirror is incomplete or out-of-date. The Download script will try to fetch the file from the original upstream location in that case. If too many files are missing on the mirror that was selected, you might however like to choose another one.

To select a specific mirror:

scripts/Download -mirror http://www.ibiblio.org/pub/linux/t2/7.0

Running scripts/Download with the argument '-mirror none' will instruct the scripts to always fetch the sources from the original site, first.

All packages get downloaded from the original locations. For each package the T2 distribution maintains a package file which points to the ftp, http or cvs location and filename. Sometimes the download site changes, more often the version numbering of a file changes. At that point the package file needs to be edited. After a successful download you may send the patch to the T2 Linux maintainers - so others get an automatic update.

Missing packages are not necessarily a problem. If you are aware what a package does and you know you don't actually need it you can pretty much ignore the error. For base packages it is usually a good idea to fetch them all.

It may be useful to download the most recent version of T2 (stable or development) since it will reflect the most recent state of the original packages.

To actually find which files are missing run:

scripts/Download -list-missing

If a package fails to download because it is not on a mirror and the original has moved away you may want to fix the URL in the package directly.

Find the package in the package directory and modify the package.desc directly. Update the URL in the [D] field and set the checksum to 0. Also update the [V] version field if it has changed.
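Resetting the checksum can also be done with sed. The sketch below assumes that a download line has the form "[D] checksum filename URL", with the checksum as the first field after the tag; this layout is an assumption of the example, so verify it against a real .desc file first. The function name reset_desc_checksum is made up for the example, and it writes the result to standard output instead of editing the file.

```shell
# Assumption: a download line looks like "[D] <checksum> <file> <url>".
# Print the .desc file with the checksum of every [D] line set to 0.
reset_desc_checksum() {
    sed 's/^\[D\] [^ ][^ ]* /[D] 0 /' "$1"
}

# Demonstration on a throw-away file with invented content.
desc=$(mktemp)
printf '%s\n' '[V] 1.0' \
    '[D] 1234567890 foo-1.0.tar.bz2 http://foo.bar/' > "$desc"
reset_desc_checksum "$desc"
rm -f "$desc"
```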

With the fixed URL re-run:

scripts/Download -required

or if there are some more files in the queue, downloading explicitly a single package can be forced with:

scripts/Download packagename

If it worked and the package builds fine (see Chapter 4, Building T2) it is recommended you send in the change to the T2 maintainers - to prevent other people from going through the same work (see the section called “Getting New Packages Into T2” for more information). The one thing you'll have to do is to fix the checksum in the [D] field (see the section called “Checksum”).

The Download script has a facility for checking the integrity of the downloaded packages. It does so during each download - but you can also run a check manually:

scripts/Download -check -all

iterates over all the packages and checks for matching checksums. If a package got corrupted, in one way or the other, it returns an error.

To get the checksum of a single tarball:

scripts/Download -mk-cksum path

Or use the even easier script which creates a checksum patch automatically:

scripts/Create-CkSumPatch package > cksum.patch

patch -p1 < cksum.patch

in short just:

scripts/Create-CkSumPatch package | patch -p1

Before starting a new download it is a good idea to create the 'download' directory and mount it from elsewhere (a symbolic link will not work with the build phase later, though in this stage it will do). This way you can use the same repository when moving to a new version of the T2 Linux scripts (check the section called “Unknown Files”).

When going through several cycles of upgrading and downloading packages (see also the section called “Download Tips”) you'll find the same packages with different versions of tarballs in your download tree (e.g. apache-1.25 and apache-1.26). The older files (apache-1.25) are considered obsolete and are named 'unknown files' in T2 jargon.

scripts/Download -list-unknown

To remove unknown files (treating the output as a white-space separated list and passing through only the third field):

scripts/Download -list-unknown | cut -d ' ' -f 3 | xargs rm -vf

or since recently just:

scripts/Cleanup -download
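Before deleting anything it may be worth looking at what the cut stage of the pipeline selects. The sketch below wraps that stage in a function and feeds it one sample line. The sample line is an assumption made for illustration, not captured output of scripts/Download, and the function name unknown_paths is made up.

```shell
# Extract the third space-separated field from each input line,
# exactly as the cut invocation in the pipeline above does.
unknown_paths() {
    cut -d ' ' -f 3
}

# Assumed sample line; real input would come from
# "scripts/Download -list-unknown".
echo 'Unknown file: download/a/apache-1.25.tar.bz2' | unknown_paths
```

Replacing 'xargs rm -vf' with 'xargs ls -l' in the real pipeline gives a preview of the files that would be removed.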

To build the target you must be root. There are two main reasons why this is currently the case:

For normal builds with a native compilation phase chroot is used. However, the chroot system-call commonly is only allowed for the root user.

Various files require special permissions, and probably specific owner and group settings, for the resulting system to work properly. However, only the root user is allowed to set those ownership and permission settings.

To compensate for this, pre-load libraries, such as the one implemented by fakeroot, could be used to intercept and fake this meta-data while building. Integrating this is on the future T2 roadmap.

To build the target, execute as root:

scripts/Build-Target

which starts building all the stages (see the section called “Build Stages”). The output of the build can be found in build/$SDECFG_ID/TOOLCHAIN/logs/.

Build failures happen only rarely in the stable series, but they might happen sometimes in the development series. Packages can break because they go out-of-date (dependencies do not match), because the downloaded package mismatches the build scripts, or maybe because there is something wrong with your build environment.

The output of the build process of the individual packages is captured in the directory build/$SDECFG_ID/var/adm/logs/. All the packages with errors show the .err extension.

A tabular overview is provided by scripts/Create-ErrList, like for example:

Error logs from live-7.0-trunk-desktop-x86-pentiumpro:

[5] network/pilot-link [5] emulators/qemu

876 builds total, 874 completed fine, 2 with errors.

Check the content of the error files to see if you can fix the problem (see also the section called “Fixing Broken Packages”).
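To get a plain list of failed package names from the log directory, a few lines of shell are enough. This is a sketch that assumes the log files are named stage-packagename.err, as in the 9-packagename.log example elsewhere in this chapter. The function name failed_packages is made up.

```shell
# Print the names of packages whose log file ends in .err.
# Log names are assumed to follow the <stage>-<package>.err pattern.
failed_packages() {
    for log in "$1"/*.err; do
        [ -e "$log" ] || continue      # directory has no .err files
        name=${log##*/}                # strip the directory part
        name=${name%.err}              # strip the extension
        echo "${name#*-}"              # strip the stage prefix
    done
}

# Usage, from the T2 top-level directory:
#   failed_packages build/$SDECFG_ID/var/adm/logs
```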

Before starting a rebuild select 'Retry Broken Packages' in scripts/Config.

Every package gets built in a directory named src.$pkg.$config.$id. To remove these directories (if there is an error it can be useful to check the content of the partial build!) use scripts/Cleanup.

Warning

Simply type 'scripts/Cleanup' to remove the src.* directories. DO NOT REMOVE THEM BY HAND! These directories may contain bind mounts to the rest of the source tree, and a simple 'rm -rf' on them may remove everything in the T2 SDE base directory!

By passing --build the script will also remove existing builds from inside the build/ directory, --cache will remove the ccache related cache directories from inside build/, and last but not least --full will remove the entire generated content in the build/ directory.
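To see why removing a src.* directory by hand is dangerous, you can list the mount points that live below it. The following is an illustrative sketch, not something scripts/Cleanup is known to do in this form. It reads the Linux mount table from /proc/mounts by default; the function name mounts_under and the directory in the usage comment are made up.

```shell
# Print all mount points at or below the given directory.
# The optional second argument names the mount table to read
# (default: /proc/mounts).
mounts_under() {
    awk -v dir="${1%/}" \
        '$2 == dir || index($2, dir "/") == 1 { print $2 }' \
        "${2:-/proc/mounts}"
}

# Usage (hypothetical directory name, absolute path required):
#   mounts_under /path/to/t2/src.bash.default.1
```

Any output means that something is still mounted inside the directory.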

Now you should have a ready system build. If you selected "Install CD / disk" or "Live CD" in the configuration phase, then you have the installer as well. Since many different targets exist, those targets should share a common installer application. A typical installer needs different things, like an embedded C library, many small binaries, and the installer binaries and scripts.

To summarize, a typical cycle to build a T2 target looks like:

scripts/Config

scripts/Download

scripts/Build-Target

Once your build has completed you can turn it into an ISO image using the scripts/Create-ISO script. This ISO can be burned straight to a bootable CD-ROM or DVD, or instantly tested in a virtual machine, such as Qemu, Xen, Bochs, VirtualBox, or VMware. To create the ISO just run[3]:

scripts/Create-ISO mycdset

After burning the mycdset_*.iso files to media, medium number one is bootable.

Once comfortable with T2, power users often have more than one target to build. All build related scripts take a '-cfg' argument with a config name. By default the scripts use the config name 'default' if no '-cfg' argument is specified. The calling sequence to build a target named 'kiosk' is:

scripts/Config -cfg kiosk

scripts/Download -cfg kiosk

scripts/Build-Target -cfg kiosk
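When juggling several configs it can help to generate this sequence instead of typing it. The sketch below only prints the three commands for a given config name; the function name t2_cycle is made up and nothing is executed.

```shell
# Print the build cycle for one config name ("default" if omitted).
t2_cycle() {
    cfg=${1:-default}
    for step in Config Download Build-Target; do
        echo "scripts/$step -cfg $cfg"
    done
}

t2_cycle kiosk
```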

And Create-ISO accepts config names accordingly:

scripts/Create-ISO mycdset kiosk

Create-ISO can combine more than one build into the ISO files, for example to create mixed CPU architecture media or to add a rescue target alongside the main system:

scripts/Create-ISO mycdset kiosk rescue

Additional options include:

Usage: scripts/Create-ISO [ -size MB ] [ -source ] [ -mkdebug ] [ -nomd5 ]

ISO-Prefix [ Config [ .. ] ]

E.g.: scripts/Create-ISO mycdset system rescue

If you want to build and install a single package on your system, the scripts/Emerge-Pkg script will download, build and install a package and all its dependencies. For example:

scripts/Emerge-Pkg packagename

Emerge-Pkg will also check if the dependencies are installed and up-to-date. However, on T2 systems installed from older stable trees this can schedule a vast number of dependencies to build, including system tools, which might not always be desired. To avoid this, the dependencies can be narrowed down to just the missing ones:

scripts/Emerge-Pkg -missing=only packagename

A complete repository, such as gnome2 or e17 (Enlightenment 17) can be built at once using the -repository option:

scripts/Emerge-Pkg -repository gnome2

Note

Emerge-Pkg with the -repository option only builds selected packages. This is because some packages - like alternative ghostscript or java variants - are deselected (optional) by default, and emerging a whole mail or printing repository would mess up the existing packages and cause so-called shared files.

When emerging a whole repository, the option controlling what to do with not yet installed, missing packages comes in handy if you - for example - have already specifically installed some packages out of a big repository and just want to update them:

scripts/Emerge-Pkg -missing=no -repository e17

If you know what you are doing, dependency resolution can be disabled entirely:

scripts/Emerge-Pkg -deps=none packagename

Further options for scripts/Emerge-Pkg are:

Usage: Emerge-Pkg [ -cfg <config> ] [ -dry-run ] [ -force ] [ -nobackup ]

[ -consider-chksum ] [ -norebuild ]

[ -deps=none|fast|indirect ] [ -missing=yes|no|only ] [ -download-only ]

[ -repository repository-name ] [ -system ] [ pkg-name(s) ]

pkg-name(s) are only optional if a repository is specified.

Internally the Emerge-Pkg script runs scripts/Build-Pkg as its backend, which can alternatively be run manually. However, keep in mind that the Build-Pkg script neither downloads the package files nor checks for dependencies! So for example:

scripts/Download packagename

scripts/Build-Pkg packagename

The results of the build can be found in /var/adm/logs/9-packagename.log - or with the .err extension if it failed.

Usually you want to pass the option '-update', since this will back up modified configuration files and restore them after the package build has finished.

Options for scripts/Build-Pkg are:

Usage: scripts/Build-Pkg [ -0 | -1 | -2 ... | -8 | -9 ]

[ -v ] [ -xtrace ] [ -chroot ]

[ -root { <rootdir> | auto } ]

[ -cfg <config> ] [ -update ]

[ -prefix <prefix-dir> ] [ -norebuild ]

[ -noclearsrc ]

[ -id <id> ] [ -debug ] pkg-name(s)

Both Emerge-Pkg and Build-Pkg build and install a package into the root of the filesystem you run them in, that is, they overwrite the files of the currently installed package.

This is usually a shared object / linker problem of the freshly bootstrapped system, or the resulting executables are for another CPU architecture. In the latter case the "This is a cross build" option, which disables the native build stage and cross builds as much as possible, was not selected in the Config.

It could also be the case that the bash package is not present in the package selection, while the T2 build scripts are executed in the chroot environment and require bash.

In T2 we decided that any file can only belong to a single package. If a second package overwrites or alters a file, this is detected by the build system and reported at the end of a package build.

Depending on the type of the file (executable or config file) this might be a more or less serious problem. Shared file errors can be ignored by the developer in the beginning of a new target development, but should be addressed properly sooner rather than later.

Possible solutions range from removing the package that installs the duplicate file, if the packages are alternatives for the same function, to renaming a file to avoid the collision.

Packages selected by default in T2 must not cause such a "shared file" conflict!

When the build breaks in stage 0 or 1 because of a command not found on your system you may need to build the package containing the command manually (see the section called “Building a Required Package Manually” for more information on building packages by hand).

Older versions of mount do not accept the '--bind' option. Either update mount to a newer version or change the '--bind' option to '-o bind'.

Another issue regarding building is that you need quite a bit of free disk space. After downloading all packages (2 GB) you need another 5 GB to build everything. Thereafter you'll need enough space to install the newly built system on.
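The free space can be checked up front with df. The following sketch adds up the two figures given above; the function name has_free_kb is made up, and the 7 GB total is only as accurate as those rough numbers.

```shell
# Succeed if the filesystem holding directory $2 (default: .) has at
# least $1 kilobytes available.
has_free_kb() {
    avail=$(df -Pk "${2:-.}" | awk 'NR == 2 { print $4 }')
    [ "$avail" -ge "$1" ]
}

# 2 GB of downloads plus 5 GB of build space, expressed in KB.
need_kb=$(( (2 + 5) * 1024 * 1024 ))
if has_free_kb "$need_kb"; then
    echo "enough space for download and build"
else
    echo "less than 7 GB free"
fi
```

Run it from the T2 top-level directory, or pass the directory to check as the second argument.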

Non-T2 installations take a little more preparation in general.

The most important reason is that some current Linux distributions like RedHat or SuSE contain broken versions of one of the intensively used utilities like sed, awk, tar or bash.

The first time you build T2 on a non-T2 distribution it may be wise not to build in your normal working environment, because the build may require up-to-date versions of sed, awk, tar or bash, but to dedicate a special build host.

If you have enough disk space, it might be easier to download one of the T2 rescue binaries (< 100 MB), which give you a full build system. Install and boot into it and you are almost done. It may be even easier if you have a binary T2 image on CD-ROM and just install it. T2 builds easily on an existing T2 installation.

If you don't succeed rebuilding your kernel and booting up because of one reason or another there is one more strategy. Download the T2 floppy images so you can have a look at your disk partitions.

To get a build system up to date for downloading and building T2 it is sometimes necessary to update utilities like bash or the coreutils package on your build system.

If T2 is already installed and all tools are up-to-date for Download and Build-Pkg you are in luck - just use them. If not, you need to download and build the package manually. In that case it is wise to check the T2 build description and configuration of the package:

First locate the package in one of the repositories:

ls package/*/coreutils

which most probably will yield: 'package/base/coreutils'. You should then use the 'D' tag in its '.desc' file to fetch the tar-ball.

As the next step you should verify if a '.conf' file is present and uses some important build configuration which you should use in your own manual build, too.

Finally you build the package, for example like:

tar xvjf coreutils-5.0.tar.bz2

cd coreutils-5.0

./configure --prefix=/usr

make

make install

Additionally you might like to use '--prefix=/usr/local' or '--prefix=/opt/package' to not mess up your build system, and apply patches from the package's configuration directory in order to fix important bugs.

With every version of T2 you will sometimes encounter broken packages. This is a result of the evolution path: packages get updated and new features, architectures, OS kernels or alternative C libraries get added. Some combinations might just not build yet, or new regressions appear as a result of related changes. Packages might fail to build because of dependency changes, or just bugs.

T2 provides some useful tools to help you fix broken builds. When a package fails to build you'll see a package build directory src.packagename.unique-id remaining in your T2 tree. This contains the full build tree for a package up to the point the compile stopped. In this tree you'll find a file named debug.sh. When run, it will place you in a shell inside the chroot sandbox with exactly the same environment and wrappers as when the package was built (among others, debug.sh sources debug.buildenv, which contains the environment).

In order to debug the failure you usually change into the package directory:

cd some_pkg-$ver

and then run make with the arguments used by T2 build:

eval $MAKE $makeopt

or if it failed at install time:

eval $MAKE $makeinstopt

Note

If the package is not based on classic Makefiles this step will obviously differ.

In order to modify files and create a patch with the differences at the end two helpers are provided: fixfile will create a backup file (with the extension .vanilla) and run an editor on the file, while fixfilediff can be used to obtain the differences at the end of the debug session:

fixfile Makefile

fixfilediff

The newly created patch can then be placed in the package directory with the .patch extension in order to apply it automatically:

cd ..

fixfilediff > ../package/repository/package/fix-topic.patch

Note

In this example 'cd ..' is used to change back to the directory where the package was extracted in. This is necessary as the T2 build-system applies the patch with 'patch -p1' and thus the patch program removes the first directory name from the file names inside the patch.

Also, the directory name is preferred over just a . as first directory name in the patch, as it usually nicely indicates for which package version the patch was created.
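The effect of 'patch -p1' on the file names in a patch can be mimicked in plain shell. This is only an illustration of the name handling, not of patch itself; the function name strip_p1 is made up.

```shell
# Mimic what "patch -p1" does to a file name found in a patch:
# drop everything up to and including the first slash.
strip_p1() {
    echo "${1#*/}"
}

strip_p1 some_pkg-1.0/Makefile
strip_p1 ./Makefile
```

Both calls yield Makefile, which is why a patch created one level above the package directory applies cleanly, and why a versioned directory name costs nothing compared to a plain dot.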

If you get stuck with a cross compile, or one of the more minimal C libraries or very minimal package selection, try building a standard 'no special optimisation, no frills' configuration first. The major target configurations get built (and therefore tested) by most people, and you can get used to T2 before you continue with a more specialized configuration.

If this fails, check the T2 mailing list for messages/queries regarding the failure.

It is useful to configure T2 for writing to terminal whilst doing the build: In scripts/Config select:

[*] Retry building broken packages

[ ] Always clean up src dirs (even on pkg fail)

[*] Print Build-Output to terminal when building

Alternatively, just run tail -f on the current log file.

In addition one can run watch in a different terminal on the list of files in ./build/default-*/root/var/adm/logs:

watch -d ls -lt build/default-*/root/var/adm/logs

Recent versions of T2 support some form of a distributed build in a cluster using distcc and icecream.

For less esoteric setups simply using RAID 0 (striping mode) using two IDE drives over two IDE channels speeds a build up significantly. This is because building packages is IO intensive.

An obvious optimisation is to select a target which builds the fewest packages, exclude building unneeded packages and to skip the final rebuild (stage 9).

If your build process should allow other users to make full use of the system, you can set the nice level to the lowest priority. e.g.

nice -n 19 scripts/Build-Target

ensures the build process won't saturate the CPU when other users need it (IO resources may be exhausted temporarily though).

This chapter gives a quick run-through on the package system. For the information that actually goes into a package - the packaging - see the section called “Description File (.desc)”.

T2 is built out of shell scripts. These scripts access the files in ./config to build the packages from source.

These scripts run with quite some environment variables being set (see the section called “Environment Variables”).

There will come a day when you want to contribute a package to the T2 tree - because you find you are building that package from source every time you do a clean install.

Mastering the package system is a good idea if you deploy similar configurations across machines. If that is your daily work your job title may be 'system administrator'.

Packages usually get accepted quickly into the T2 SVN trunk source tree, and even if not, local packages can still be useful.

The T2 Linux package system is pretty straightforward and easy to understand. Most users are system administrators and the choice of bash over make should be considered pragmatic - shell scripts are much easier to read, write and debug than Makefiles. For the functional parts Makefiles would include shell-scripts anyway.

Packages are stored in a logical tree of repositories where each package has its own directory. The package can be stored in repositories according to its type (i.e. base, gnome2, powerpc) or grouped by the maintainer.

The build system created for T2 Linux needs some meta information about each package in order to download and build it but also textual information for the end-user. For maintenance reasons we have chosen a tag based format in Text/Plain.

This section documents the t2.package.description tags format. You can also add additional tags, their names have to start with 'X-' (like [X-FOOBAR]).

Please use the tags in the same order as they are listed in this table and add a blank line where a new table section starts. Please use the X-* tags after all the other tags to ensure best readability. scripts/Create-DescPatch can help you here.

Table 5.1. T2 .desc Tags

| short name | long name | mandatory | multiple |
|---|---|---|---|
| | COPY | (x) | x |
| I | TITLE | x | |
| T | TEXT | x | x |
| U | URL | | x |
| A | AUTHOR | x | x |
| M | MAINTAINER | x | x |
| C | CATEGORY | x | |
| F | FLAG | | |
| R, ARCH | ARCHITECTURE | | |
| K, KERN | KERNEL | | |
| E, DEP | DEPENDENCY | x | |
| L | LICENSE | x | |
| S | STATUS | x | |
| V, VER | VERSION | x | |
| P, PRI | PRIORITY | x | |
| | CV-URL | | |
| | CV-PAT | | |
| | CV-DEL | | |
| O | CONF | | |
| D, DOWN | DOWNLOAD | x | |
| S, SRC | SOURCE | | |

The meanings of the different tags are:

[COPY]

With the [COPY] tag the T2 SDE and probably additional copyright information regarding the sources of this particular package are added. The copyright text is automatically (re-)generated by scripts/Create-CopyPatch from time to time, preferably on each checkin of modified or new files.

The automatically generated text is surrounded by T2-COPYRIGHT-NOTE-BEGIN and T2-COPYRIGHT-NOTE-END - additional lines containing the word 'Copyright' and additional information around these tags stay untouched.

[I] [TITLE]

A short description of the package. Can be given only once.

[T] [TEXT]

A detailed package description. It can be used multiple times to form a long text.

[U] [URL] http://foo.bar/

A URL related to the package, for example the homepage. It can be specified multiple times.

[A] [AUTHOR] Rene Rebe <rene@exactcode.de> {Core Maintainer}

The tag specifies the original author of the package. The <e-mail> and the {description} are both optional. Normally the main author should be listed, but multiple tags are possible. To keep the file readable, not more than four tags should be listed normally. At least one <e-mail> specification should be present to make it easy to send patches upstream.

[M] [MAINTAINER] Rene Rebe <rene@exactcode.de>

Same format as [A] but contains the maintainer of the package.

[C] [CATEGORY] console/administration x11/administration

The categories the package is sorted into. A list of possible categories can be found in the file PKG-CATEGORIES.

[F] [FLAG] DIETLIBC

Special flags to signal special features or build-behavior for the build-system. A list of possible flags can be found in the file PKG-FLAGS.

[R] [ARCH] [ARCHITECTURE] + x86

[R] [ARCH] [ARCHITECTURE] - sparc powerpc

Usually a package is built on all architectures. If you are using [R] with '+' the package will only be built for the given architectures. If you use it with '-' it will be built for all except the specified architectures.
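The '+' and '-' rules can be illustrated with a few lines of shell. This is only a sketch of the selection logic described above, not the code used by the T2 build system; the function name arch_enabled is made up for this example.

```shell
# Hypothetical helper, not part of T2: decide whether a package is built
# for architecture $1, given the operator ('+' or '-') and the list of
# architectures taken from an [R] line.
arch_enabled() {
	arch=$1
	op=$2
	shift 2
	listed=no
	for a in "$@"; do
		if [ "$a" = "$arch" ]; then
			listed=yes
		fi
	done
	case $op in
		+) [ "$listed" = yes ] ;;   # build only for the listed architectures
		-) [ "$listed" = no ] ;;    # build for all but the listed architectures
		*) return 2 ;;              # unknown operator
	esac
}

arch_enabled x86 + x86 && echo "x86: built"
arch_enabled sparc - sparc powerpc || echo "sparc: skipped"
```

The same logic would apply to the [K] tag with kernel names in place of architectures.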

[K] [KERN] [KERNEL] + linux

[K] [KERN] [KERNEL] - minix

Usually a package is built on all kernels. If you are using [K] with '+' the package will only be built for the given kernel. If you use it with '-' it will be built for all except the specified kernels.

[E] [DEP] [DEPENDENCY] group compiler

[E] [DEP] [DEPENDENCY] add x11

[E] [DEP] [DEPENDENCY] del perl

When the keyword 'group' is specified, every dependency on a package in this group (compiler in the example) is expanded to dependencies on all packages in the group.

The keywords 'add' and 'del' can be used to add or delete a dependency that cannot be detected automatically by the build system. For example, a font package extracts files into an x11 package's font directories. Since the font package does not use any of the x11 package's files, it does not depend on that package automatically and the dependency must be hard-coded. Use with care and only where it is really, REALLY, necessary.

[L] [LICENSE] GPL

This tag specifies the license of the package. Possible values can be found in misc/share/REGISTER.

[S] [STATUS] Stable

The maturity of the package. Besides 'Stable', the value can be 'Gamma' (very close to stable), 'Beta' or 'Alpha' (far away from stable).

[V] [VER] [VERSION] 2.3 19991204

Package version and optional revision.

[P] [PRI] [PRIORITY] X --3-5---9 010.066

The first field specifies whether the package is built by default or not (X=on, O=off). The second and third fields specify the stages and the build order for that package.

See the section called “Build Stages” and the section called “Build Priority” for a detailed explanation.
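As a rough illustration of how the stage field can be read, the following sketch tests whether a given stage digit occurs in it. It assumes that a stage is enabled exactly when its digit appears in the field; stage_enabled is a made-up name and not a T2 function.

```shell
# Hypothetical helper: succeed if the stage digit $2 occurs in the stage
# field $1 of a [P] line, for example "-----5---9".
stage_enabled() {
	case $1 in
		*"$2"*) return 0 ;;
		*)      return 1 ;;
	esac
}

stages="-----5---9"   # stage field of the Python example in this chapter
for stage in 0 1 2 3 4 5 6 7 8 9; do
	if stage_enabled "$stages" "$stage"; then
		echo "stage $stage: build"
	fi
done
```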

[CV-URL] http://www.research.avayalabs.com/project/libsafe/

The URL used by scripts/Check-PkgVersion.

[CV-PAT] ^libsafe-[0-9]

The pattern used by scripts/Check-PkgVersion.

[CV-DEL] \.(tgz|tar\.gz)$

The delete pattern for scripts/Check-PkgVersion.

[O] [CONF] srcdir="$pkg-src-$ver"

The given text will be evaluated as if it would be at the top of the package's *.conf file.

[D] [DOWN] [DOWNLOAD] cksum foo-ver.tar.bz2 http://the-site.org/

[D] [DOWN] [DOWNLOAD] cksum foo-ver.tar.bz2 !http://the-site.org/foo-nover.tar.bz2

[D] [DOWN] [DOWNLOAD] cksum foo-ver.tar.bz2 cvs://:pserver:user@the-site.org:/cvsroot module

Download the specified file from the URL formed by appending the file name (second field) to the base URL (third field). If a checksum is specified (neither '0' nor 'X'), the downloaded file is checked against it. The download URL is split like this because the file name is also used for T2 mirrors, and checkouts from version control systems need a target tarball name.

The checksum can initially be '0' for later generation or 'X' to specify that the checksum should never be generated nor checked, for example due to checkouts from version control systems.

When a '!' is specified before the protocol, a full URL must be given and is used as is, but the downloaded content is saved under the filename given in the second field. This is useful to add a version to unversioned files, or just to give files a more meaningful name and avoid conflicts.
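The two URL forms can be sketched as follows. This is an illustration of the rules described above and not the T2 download code; download_url is a made-up name, and locations for version control systems (such as cvs://) are not covered.

```shell
# Hypothetical helper: print the URL to fetch for a [D] line, given the
# file name (second field) and the location (third field).
download_url() {
	file=$1
	location=$2
	case $location in
		\!*) printf '%s\n' "${location#?}" ;;     # full URL given, drop the '!'
		*)   printf '%s\n' "$location$file" ;;    # base URL followed by file name
	esac
}

download_url foo-ver.tar.bz2 http://the-site.org/
download_url foo-ver.tar.bz2 '!http://the-site.org/foo-nover.tar.bz2'
```

In both cases the result would be stored locally as foo-ver.tar.bz2, the name given in the second field.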

Besides the normal ftp://, http:// and https:// protocols, cvs://[4], svn://, svn+http://, svn+https:// as well as git:// are supported at the time of writing.

If the checksum is initially set to 0, the package maintainer can use scripts/Create-CkSumPatch to generate it once the file has been downloaded:

scripts/Create-CkSumPatch xemacs > cksum.patch

patch -p1 < cksum.patch

or in short just:

scripts/Create-CkSumPatch xemacs | patch -p1

[SRC] [SOURCEPACKAGE] pattern1 pattern2 ...

This enables the build_this_package function to build the contents of more than one tarball, namely all files matching the patterns. Do not put the extension of the tarballs (e.g. tar.gz) into this tag, as the build system might transform it to .bz2 automatically! A pattern that matches the needed tarball is enough, for example:

[SRC] mypkg-version1 gfx

[D] cksum mypkg-version1.tar.gz http://some.url.tld

[D] cksum mypkg-gfx-version2.tbz2 http://some.url.tld

[D] cksum mypkg-data-version3.tar.bz2 http://some.url.tld

This would run the whole build cycle with mypkg-version1.tar.bz2 and mypkg-gfx-version2.tbz2, but not with mypkg-data-version3.tar.bz2. As the parameters are patterns to match, a simple '.' is enough to match all specified download files.
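The matching can be tried out with a short shell sketch. It assumes that the patterns behave like grep regular expressions, which is what the remark about '.' suggests; matches_src is a made-up name and not part of the build system.

```shell
# Hypothetical helper: succeed if the file name $1 matches at least one
# of the remaining arguments, treated as grep regular expressions.
matches_src() {
	file=$1
	shift
	for pattern in "$@"; do
		if printf '%s\n' "$file" | grep -q -e "$pattern"; then
			return 0
		fi
	done
	return 1
}

for tarball in mypkg-version1.tar.bz2 mypkg-gfx-version2.tbz2 \
               mypkg-data-version3.tar.bz2; do
	if matches_src "$tarball" mypkg-version1 gfx; then
		echo "build:  $tarball"
	else
		echo "ignore: $tarball"
	fi
done
```

With the patterns from the example, the first two tarballs are reported as built and the data tarball is ignored.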

[COPY] --- T2-COPYRIGHT-NOTE-BEGIN ---

[COPY] This copyright note is auto-generated by scripts/Create-CopyPatch.

[COPY]

[COPY] T2 SDE: package/.../python/python.desc

[COPY] Copyright (C) 2004 - 2006 The T2 SDE Project

[COPY] Copyright (C) 1998 - 2004 ROCK Linux Project

[COPY]

[COPY] More information can be found in the files COPYING and README.

[COPY]

[COPY] This program is free software; you can redistribute it and/or modify

[COPY] it under the terms of the GNU General Public License as published by

[COPY] the Free Software Foundation; version 2 of the License. A copy of the

[COPY] GNU General Public License can be found in the file COPYING.

[COPY] --- T2-COPYRIGHT-NOTE-END ---

[I] The Python programming language

[T] Python is an interpreted object-oriented programming language, and is

[T] often compared with Tcl, Perl, Java or Scheme.

[U] http://www.python.org/

[A] Stichting Mathematisch Centrum, Amsterdam, The Netherlands

[M] Rene Rebe <rene@t2-project.org>

[C] base/development

[L] OpenSource

[S] Stable

[V] 2.5

[P] X -----5---9 112.000

[D] 4007565864 Python-2.5.tar.bz2 http://www.python.org/ftp/python/2.5/

The configuration file is executed at build time. The build system is fairly smart: for many standard builds it is not necessary to write such a build script. In addition, standard and cross-compilation switches are passed on to configure, make, gcc and friends automatically. A .conf file needs to be created only if these automatically detected values have to be modified.

Since the possibilities in .conf files are unlimited, please refer to the section called “Environment Variables” and later sections.

# --- T2-COPYRIGHT-NOTE-BEGIN ---

# This copyright note is auto-generated by scripts/Create-CopyPatch.

#

# T2 SDE: package/.../python/python.conf

# Copyright (C) 2004 - 2006 The T2 SDE Project

# Copyright (C) 1998 - 2004 ROCK Linux Project

#

# More information can be found in the files COPYING and README.

#

# This program is free software; you can redistribute it and/or modify

# it under the terms of the GNU General Public License as published by

# the Free Software Foundation; version 2 of the License. A copy of the

# GNU General Public License can be found in the file COPYING.

# --- T2-COPYRIGHT-NOTE-END ---

python_postmake() {

cat > $root/etc/profile.d/python <<-EOT

export PYTHON="$root/$prefix/bin/python"

EOT

}

runpysetup=0

var_append confopt " " "--enable-shared --with-threads"
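To make the var_append call in the example above easier to follow, here is a stand-in that mimics its apparent behaviour. It assumes that var_append takes a variable name, a delimiter and a value, and inserts the delimiter only when the variable already has content. The real function is provided by the T2 build system and may differ in detail; sketch_var_append and the second option shown are made up for illustration.

```shell
# Stand-in for illustration only, not the T2 implementation.
# Usage: sketch_var_append NAME DELIMITER VALUE
sketch_var_append() {
	eval "_current=\${$1}"
	if [ -n "$_current" ]; then
		# variable already has content: append delimiter and value
		eval "$1=\$_current\$2\$3"
	else
		# variable is empty: store the value without a leading delimiter
		eval "$1=\$3"
	fi
}

confopt=""
sketch_var_append confopt " " "--enable-shared --with-threads"
sketch_var_append confopt " " "--disable-static"   # illustrative option only
echo "$confopt"
```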

Patch files are stored with the postfix .patch in the package directory and are applied automatically during the package build.

There are a few ways to control applying patches conditionally by adding an additional extension:

.patch.cross

Patches with .patch.cross are only applied in the cross compile stages 0 and 1.

.patch.cross0

Patches with .patch.cross0 are only applied in the toolchain stage 0.

.patch.$arch

Patches with .patch.$arch are only applied when compiling for the CPU architecture matching $arch.

.patch.$xsrctar

Useful for packages with multiple tarballs, as it allows matching patches to the individual files. Patches matching the beginning of the extracted source package filename are applied.
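The selection rules for the first three extensions can be summarised in a small shell function. This is a sketch of the rules as described above, not the code used by the build system; patch_applies is a made-up name, and the .patch.$xsrctar case is left out.

```shell
# Hypothetical helper: succeed if patch file $1 should be applied when
# building in stage $2 for architecture $3.
patch_applies() {
	file=$1
	stage=$2
	arch=$3
	case $file in
		*.patch)         return 0 ;;                              # always applied
		*.patch.cross0)  [ "$stage" = 0 ] ;;                      # toolchain stage only
		*.patch.cross)   [ "$stage" = 0 ] || [ "$stage" = 1 ] ;;  # cross stages 0 and 1
		*.patch."$arch") return 0 ;;                              # matching architecture
		*)               return 1 ;;                              # anything else is skipped
	esac
}

patch_applies hotfix.patch 5 x86 && echo "hotfix.patch: applied"
patch_applies gcc.patch.cross 5 x86 || echo "gcc.patch.cross: skipped"
```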

If more sophisticated conditional control is needed for a patch, it can be given a completely different name, such as .diff or .patch.manual, and added dynamically to the patchfiles variable in the package's .conf:

if condition; then

var_append patchfiles " " $confdir/something.diff

fi

The .cache file holds automatically generated information about the package such as dependencies, build time, files, size ...

Packages committed to the T2 Linux source tree are built on a regular basis on test systems by the tree maintainer. For each such regression test a .cache file is generated and placed in the package directory. When the package fails to build, this is marked in the file and the tail of the ERROR-LOG is inserted as well. The main use of a cache file during the build process is to resolve dependencies and to queue the packages during a Cluster-Build. A cache file can look like this:

[TIMESTAMP] 1133956688 Wed Dec 7 12:58:08 2005

[BUILDTIME] 80 (9)

[SIZE] 47.48 MB, 4326 files

[DEP] bash bdb bdb33 binutils bluez-libs bzip2 coreutils

[DEP] diffutils findutils gawk gcc gdbm glibc grep

[DEP] libx11 linux-header make mktemp ncurses net-tools

[DEP] openssl patch readline sed sysfiles tar tcl

[DEP] tk util-linux xproto zlib

The dependencies included in the .cache file are only the direct or explicit dependencies of this single package. The recursive, indirect or implicit dependencies of the packages' dependencies are generated on the fly in e.g. Create-PkgQueue or Emerge-Pkg.
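For quick inspection, the direct dependencies can be pulled out of a .cache file with a one-line awk program. The sketch below assumes the [DEP] line layout shown in the example; cache_deps is a made-up helper, and the shortened cache file is created only for the demonstration.

```shell
# Print every dependency listed on the [DEP] lines of the .cache file $1,
# one package name per line.
cache_deps() {
	awk '$1 == "[DEP]" { for (i = 2; i <= NF; i++) print $i }' "$1"
}

# Demonstration with a shortened, made-up cache file.
cache=$(mktemp)
cat > "$cache" <<'EOT'
[TIMESTAMP] 1133956688 Wed Dec 7 12:58:08 2005
[BUILDTIME] 80 (9)
[DEP] bash bzip2 coreutils
[DEP] gcc glibc
EOT
cache_deps "$cache"
rm -f "$cache"
```

Resolving the indirect dependencies would mean repeating this lookup for the .cache file of every package found.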

When 'Disable packages which are marked as broken' is selected in the config, a package will not be built if a .cache file does not exist, or indicates an error.

To get a grip on how the build scripts work, the best thing to do is to take one of the smaller standard packages and build it by hand. Start changing parameters and see what happens in /var/adm/logs and the ./src trees.

scripts/Build-Pkg package

By studying existing config files you'll find there are a number of interesting features.

Many standard configure-type builds do not need any scripting at all. T2 does it all for you.

When a package needs special configuration parameters or command sequences, write them directly into the shell script.

Usually you have already compiled and installed the program you want to convert to a T2 extension package.

Find and download the tarballs you need in a directory for that purpose.

At this point it is worth checking what the installation documents say. For example you may need to use configuration options like:

--enable-special-feature

The packages you have downloaded are most likely not in the bz2 format. If you have a slow internet connection and want to prevent a second download, you may want to copy the tarballs directly into the archive. For example:

gunzip package.tar.gz

bzip2 package.tar

mkdir -p ./download/repository/package

cp package.tar.bz2 ./download/repository/package

If your package goes beyond a standard configure run, or has its own non-compliant installation procedure, some scripting is required.

It is quite possible you need to do some patching. In the case of xfig, XPM was needed and after copying the original Imakefile to Imakefile.old it was changed and a diff was run:

diff -uN ./Imakefile.old ./Imakefile

The output can be added as a patch to the package's configuration directory. The T2 Linux build scripts will apply the patches automatically (see the section called “Patch Files (.patch)”).

Before wrapping up your extension and sending it in, test it exhaustively through several package builds and make sure:

all cp, rm, ln etc. commands are forced (for rebuilds and updates)

it builds

it builds a 2nd time (for rebuilds and updates)

it works correctly

man pages etc. go to the right locations

all the other files in the file-list are at the correct location

you included the resulting .cache file (the section called “Cache File (.cache)”) so the initial dependencies are known

And when you really want to make the package perfect:

the package honors the build variable $prefix so the user or e.g. a target is able to install it to any location required

it cross builds, that is picks the correct compiler and is DESTDIR aware
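The points about forced commands and $prefix can be illustrated with a small post-install step. Everything in this sketch is hypothetical: the package name mypkg, the function name and the file names are made up, and $root and $prefix are set by hand only so that the sketch runs outside the build system.

```shell
# Stand-ins for the build variables, set here for demonstration only.
root=$(mktemp -d)
prefix=usr

# Hypothetical post-install step that survives being run twice.
mypkg_postmake() {
	mkdir -p "$root/$prefix/bin" "$root/$prefix/share/mypkg"
	printf 'example data\n' > "$root/$prefix/share/mypkg/data.txt"
	# -f lets a rebuild overwrite files left behind by an earlier build
	cp -f "$root/$prefix/share/mypkg/data.txt" "$root/$prefix/share/mypkg/data.bak"
	ln -sf ../share/mypkg/data.txt "$root/$prefix/bin/mypkg-data"
	rm -f "$root/$prefix/share/mypkg/obsolete.txt"
}

mypkg_postmake
mypkg_postmake   # a rebuild must succeed as well
```

Because every path is derived from $root and $prefix, the same step works unchanged when the package is installed to another location.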

The first time you contribute a package you may want to send it, as a patch, to the T2 community. Regular contributors might get write access to the relevant version control system; otherwise, modifications need to be sent in as patches.

If you find a bug in a package, scripts or documentation it is usually a good idea to share your findings with others.